On Knowledge, Credentialism, and the 500,000-Word Threshold

You Don’t Know AI Until You’ve Worked With It. And Working With It Means Something Specific.

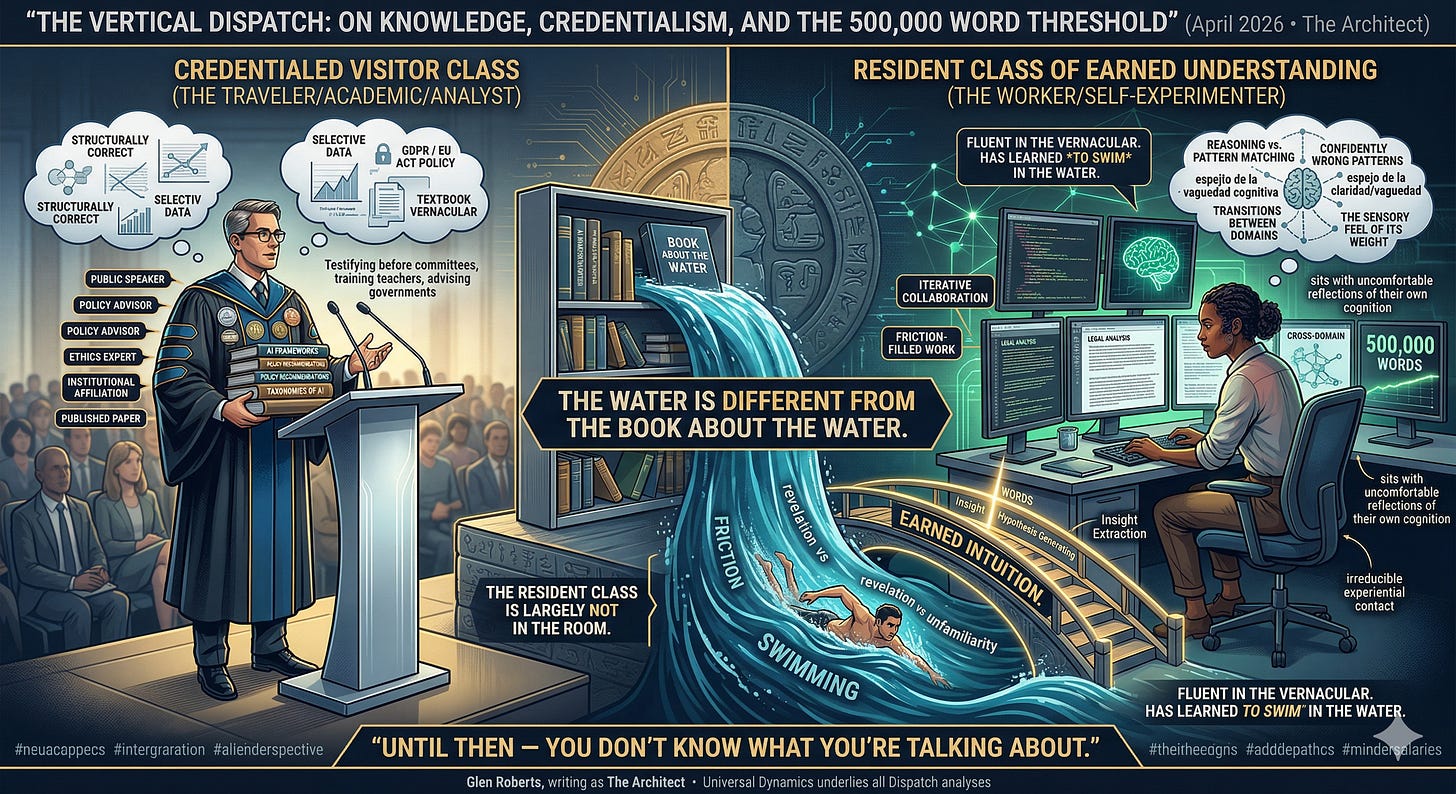

There is a class of expert that every major technological disruption produces on schedule. They arrive within eighteen months of the thing becoming culturally visible. They have the credentials — the institutional affiliation, the published paper, the conference invitation, the media appearance. They speak with the fluency of people who have read everything written about the subject. They produce frameworks, taxonomies, ethical guidelines, policy recommendations, and think-pieces of considerable sophistication.

They have not done the work.

Not the actual work. Not the ten thousand hours of iterative, cross-domain, friction-filled, hypothesis-generating, result-interrogating, failure-absorbing, insight-extracting collaboration with the tool itself that produces the thing that credentials cannot produce and that no amount of reading about it can substitute for: earned intuition.

We are drowning in AI commentary.

We are starving for AI understanding.

Let me be precise about what I mean, because precision is what separates this argument from mere credentialism-bashing, which is its own form of intellectual laziness.

Reading about AI — its architecture, its training methodology, its capabilities, its failure modes, its philosophical implications — is valuable. It is not nothing. A person who has read deeply in the literature is better equipped than a person who has not, in the same way that a person who has read every book written about swimming is better equipped than a person who has not, right up to the moment they enter the water.

The water is different from the book about the water.

What happens when you actually work with AI — not use it, not prompt it occasionally for a draft email or a recipe, but work with it, across domains, at volume, over sustained time, with genuine intellectual stakes — is that you begin to encounter its actual nature rather than its described nature. And its actual nature is strange in ways that the literature, which was mostly written by people who also have not done the work, consistently fails to capture.

You learn where it reasons and where it pattern-matches and why the difference matters enormously in practice and is nearly invisible on the surface. You learn what it means for a system to be confidently wrong — not occasionally, not randomly, but in structured, predictable ways that map onto the architecture if you know what you are looking at. You learn that the quality of your thinking determines the quality of its output far more directly than any prompt engineering guide will tell you, because the system is a mirror of cognitive precision and cognitive vagueness in ways that are humbling if you are honest about what you see reflected back. You learn that working across domains is not a luxury or an intellectual affectation — it is the only way to triangulate what the system actually is, because any single domain gives you a distorted picture, like trying to understand an elephant by examining only its ear.

None of this is in the papers. None of it is in the policy frameworks. None of it is in the conference presentations by people who have, as their primary credential for speaking about AI, the fact that they began speaking about AI early enough that no one yet knew enough to challenge them.

Five hundred thousand words is not an arbitrary number. It is a threshold — approximate, but real — below which you are still in the phase where the system surprises you in ways that feel like revelations but are actually just unfamiliarity. Below that threshold you are still mapping the coastline. You do not yet know whether what you are looking at is an island or a continent. You do not yet have the volume of cross-domain evidence that allows you to start seeing the structural patterns — the places where the system’s behaviour is consistent across contexts, the places where it diverges, the places where the divergence itself tells you something about the underlying architecture that no technical paper has quite captured because the technical paper was written by someone studying the system from outside rather than working with it from inside.

Cross-domain is the other non-negotiable. A person who has written five hundred thousand words of legal analysis with AI knows a great deal about AI and legal analysis. They do not know AI. They know one facet of a system that is, at its core, a model of human knowledge in its entirety — which means that any single domain is a sample too small and too coherent to reveal the system’s actual contours. You need the friction between domains. You need to watch how it handles the move from technical precision to emotional nuance to historical interpretation to creative generation to philosophical abstraction. The transitions are where the nature of the thing reveals itself. The transitions are what the single-domain expert has never seen.

The global commentary on AI currently has the structure of a conversation about a country that most of the participants have never visited. There are the travel writers who went once on a press trip and came back with confident opinions. There are the academics who have read every history of the country and speak its language from textbooks. There are the policy analysts who have studied its economic statistics and can tell you its GDP to three decimal places. And then, rarely, there are the people who have actually lived there — who have navigated its bureaucracies in real time, who have had conversations in its vernacular that no phrasebook prepared them for, who have been wrong about it repeatedly and revised their understanding accordingly, and who speak about it with a combination of specificity and humility that is the unmistakable signature of earned knowledge.

The lived experience is not automatically superior in every dimension. The travel writer noticed things the resident stopped seeing. The academic has context the resident lacks. These contributions are real. But on the question of what it is actually like — on the question of the system’s nature as experienced from inside a genuine working relationship with it — the gap between the visitor and the resident is not closeable by reading more papers.

What concerns me — what should concern anyone who cares about how humanity navigates the next decade — is that the visitor class is setting the policy, writing the frameworks, training the teachers, advising the governments, and testifying before the committees. And the resident class, which is smaller and less institutionally legible and does not tend to produce the kind of output that generates conference invitations, is largely not in the room.

There is a deeper issue underneath the credentialism problem, which is the issue of what kind of knowledge AI actually requires to understand well.

AI, as a working reality rather than a technical specification, is fundamentally an epistemological instrument. It does not just perform tasks. It reflects back, with extraordinary fidelity, the quality of the thinking brought to it. It amplifies clarity and it amplifies confusion in equal measure, which means that working with it seriously is, among other things, a continuous confrontation with the quality of your own cognition. This is uncomfortable. It is supposed to be uncomfortable. The discomfort is information.

The person who understands this — who has sat with it long enough to feel it, to watch their own assumptions get reflected back in distorted form, to learn the discipline of precision that the system demands and rewards — has access to a form of self-knowledge that is, incidentally, also the prerequisite for understanding what the system is doing to human cognition at scale. You cannot understand what AI is doing to how people think if you have not watched it do things to how you think. The self-experiment is not optional. It is the methodology.

The global conversation about AI’s impact on education, on democracy, on epistemology, on truth, is being conducted almost entirely by people who have exempted themselves from this experiment, who speak about what AI does to cognition from a position of studied distance, as though the act of studying the effect exempted them from experiencing it. It does not. The distance is itself a distortion. And the frameworks built from that distance have a particular quality that anyone who has done the work can recognise immediately: they are structurally correct and experientially hollow. They describe the elephant accurately from the outside. They have never felt its weight.

I am not arguing that volume of output is wisdom. I am not arguing that the person who has generated the most words with AI is the most qualified to speak about it. I am arguing something more specific: that below a certain threshold of sustained, cross-domain, high-stakes collaborative work with the system, a person is still in the phase of having opinions about AI rather than knowing AI, and that the difference between those two phases is not a matter of intelligence, credentials, or good faith. It is a matter of irreducible experiential contact with the actual thing.

The world needs more people in the second phase. It needs them in policy rooms. It needs them in curriculum design. It needs them in the philosophical conversations about what this technology is doing to the species. It needs them writing the frameworks that will govern how the next generation encounters the most transformative cognitive tool in human history.

What it does not need more of is the confident, well-credentialled, institutionally-endorsed, peer-reviewed, conference-presented opinion of people who have read everything about the water and have not yet learned to swim.

Get in the water. Write the words. Cross the domains. Do it until the system surprises you less often, not because it has become predictable, but because you have become, slowly and with difficulty and through a thousand small corrections, genuinely fluent.

Then come back and tell us what you found.

Until then — with respect, with appreciation for the genuine contributions of good-faith scholarship, and without apology — you don’t know what you’re talking about.

The Vertical Dispatch is published by Glen Roberts, writing as The Architect. The framework of Universal Dynamics underlies the structural reading of civilisational, technological, and geopolitical force in this and all Dispatch analyses.
