Pedagogy in the Age of AI

The Map Is Not the Territory. The Word Is Not the Thing. And Your Child Is Being Taught to Forget the Difference.

Pedagogy is one of those words that academia has successfully drained of urgency. It sounds administrative. It sounds like something that happens in faculties of education between people in lanyards. It is, in fact, the most consequential question any civilisation can ask itself: how do we transmit what it means to be human to the next generation? Everything else — the technology, the curriculum, the platforms, the policy — is downstream of the answer to that question.

The answer, globally, is currently in crisis. And the crisis predates artificial intelligence, which is precisely what makes the AI question so dangerous. We are not a healthy system encountering a new challenge. We are a compromised system being hit by a structural disruption of extraordinary magnitude, without the intellectual foundations to absorb it.

Here is what is happening in classrooms worldwide, described without the reassuring language of the ed-tech industry.

Reading scores were already sliding to their lowest levels in decades before the pandemic — and educators identified the causes as increased screen time, shortened attention spans, and a decline in reading longer-form writing. Then the pandemic demolished two years of foundational development for hundreds of millions of children. Then generative AI arrived and handed every student on earth a machine that would do their reading, their writing, and their thinking on demand. In the UK, only 18.7% of children aged 8 to 18 say they read something for pleasure daily — the lowest figure since records began. 59% of UK children aged 7 to 17 already have AI in their hands. The trajectory is not ambiguous.

The OECD’s Digital Education Outlook 2026 puts it with institutional precision: if AI is designed or used without pedagogical guidance, outsourcing tasks to it simply enhances performance with no real learning gains. Performance without learning. Output without cognition. The appearance of education without the substance of it. This is the condition the global teaching system is now managing at scale, and most of it does not yet have the conceptual vocabulary to name what is going wrong.

63% of American teenagers are using AI tools like ChatGPT for schoolwork. Only 30% of their teachers report feeling confident using the same tools. The adults responsible for guiding the next generation through the most significant cognitive disruption in the history of mass education are, by a substantial majority, less fluent in the technology than the students they are supposed to be guiding. Only 25% of teachers received any AI training during the rapid adoption period of 2023 to 2024. We have handed children a tool we do not ourselves understand and told them to learn.

This is not a technology problem. It is a pedagogy problem. And the pedagogy problem is, at its root, a philosophy problem — specifically, the near-total disappearance from mainstream education of the most important distinction in the history of human thought.

The symbol is not the referent.

This is not an obscure academic proposition. It is a foundational insight of semiotics, of linguistics, of cognitive science, of philosophy of mind, and of every serious tradition of wisdom education that has ever existed. Ferdinand de Saussure formalised it in the early twentieth century. Alfred Korzybski built an entire system of thought around it, captured in his famous formulation: the map is not the territory. Ludwig Wittgenstein spent his career mapping the ways language misleads us about the nature of reality. Contemplative traditions have their own versions of it, most famously the Zen one: the finger pointing at the moon is not the moon.

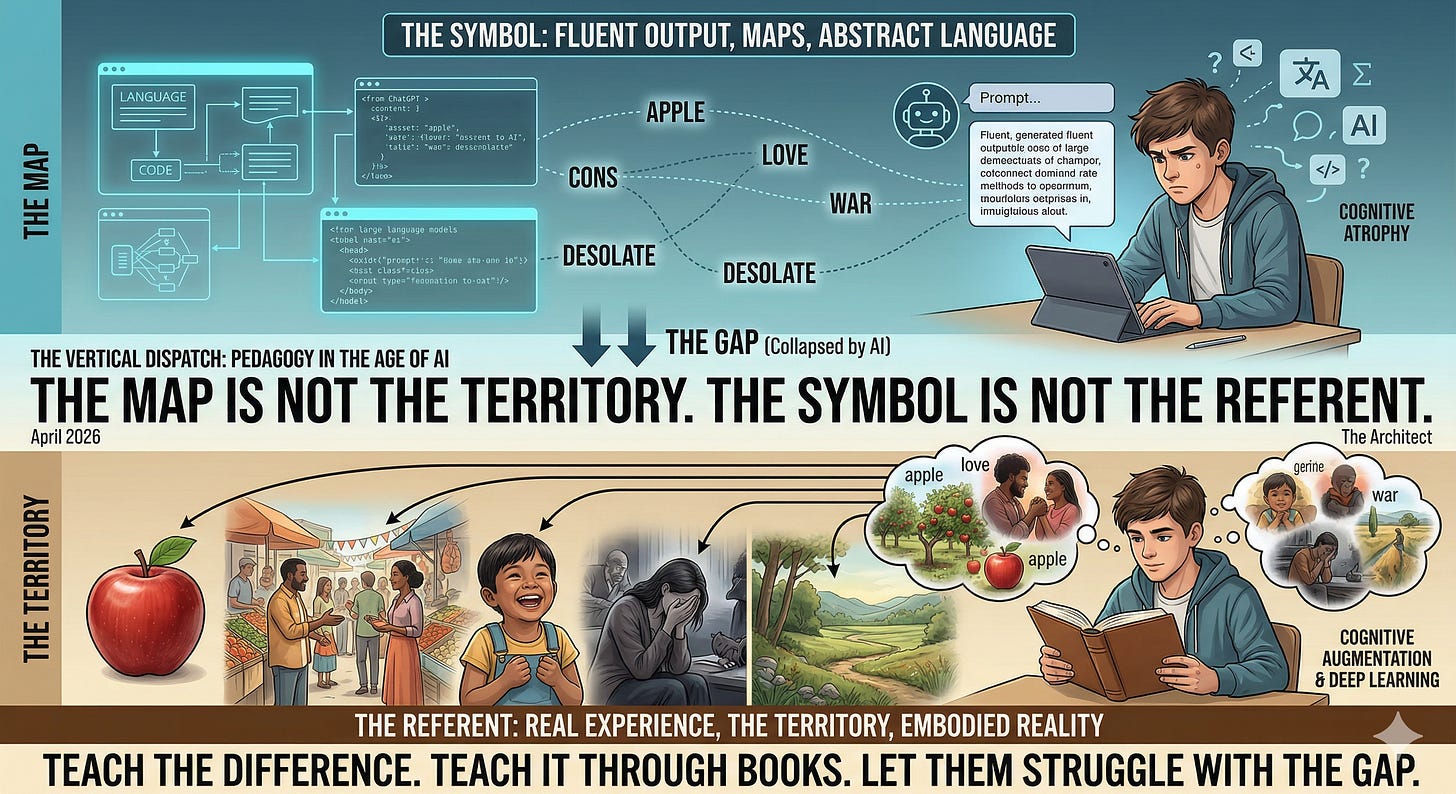

What does it mean in plain terms? A word is a symbol. The thing the word refers to — the object, the experience, the sensation, the relationship, the grief, the joy — is the referent. The word “apple” is not an apple. The word “love” is not love. The word “war” is not war. Between the symbol and the referent there is always a gap, and the entire project of genuine education is to train a human being to navigate that gap with precision, humility, and depth.

A child who reads learns this gap. Not because anyone explains it to them — though the best teachers do — but because reading forces the encounter. You read the word “desolate” and at first it is just a shape, just a sound. You encounter it in context, again and again, in different sentences, different emotional registers, different narrative situations. Gradually — through friction, through confusion, through the slow accumulation of experiential association — the symbol acquires depth. It begins to point at something real. The child has built a connection between the symbol and the referent through their own cognitive labour. That connection is theirs. It lives in them. It is not retrievable by a search engine because it was never stored in a database. It was grown.

This is what reading does that no other technology replicates. It is not information transfer. It is the construction of a self that has the internal architecture to inhabit language rather than merely consume it.

What AI does, in the educational context as currently deployed, is collapse the gap between symbol and referent before the child has had the chance to feel its depth. The student types a prompt. The system returns fluent, coherent, grammatically impeccable text. The text uses the words correctly — in the technical sense that they are in the right syntactic positions and produce plausible semantic output. But the student has not done the work of grounding those words in experience. They have received symbols without referents. They have received the map without ever having walked the territory. Researchers describe this as the difference between cognitive augmentation and cognitive atrophy: tools that handle lower-order tasks to free up mental resources for higher-order thinking on one end, and tools that lead to the weakening of skills through disuse on the other.

The atrophy is not hypothetical. In one study, researchers submitted entirely AI-generated answers to undergraduate psychology exams. Human examiners failed to detect 94% of the AI submissions, and those submissions outperformed real students by approximately half a grade boundary on average. What this tells us is not that AI is impressive. It tells us that our assessment systems have been measuring the symbol rather than the referent for so long that they cannot tell the difference between a student who understands something and a machine that has learned to produce the linguistic patterns associated with understanding. We built the test wrong. We have been measuring the map.

Tom Chatfield’s recent white paper on AI and pedagogy cautions against letting AI erode essential human skills — critical thinking, discernment, and domain expertise — and advocates instead for AI as a catalyst for deeper learning. It critiques defensive, surveillance-based institutional responses and calls for a shift toward transparent, mastery-based assessment. He is right about the direction. The question is whether institutions move fast enough, and whether they have the foundational philosophy to know what mastery actually means when the symbol/referent distinction has been lost.

Globally, the teaching domain is responding to this crisis in three modes, and only one of them is adequate.

The first mode is prohibition: ban AI, detect AI, punish AI use, restore the old assessment forms. This is the checkers response. It fights the last war with the last war’s weapons and will fail, because the technology is already in the hands of 59% of UK children aged 7 to 17 and the detection tools are demonstrably ineffective.

The second mode is integration without philosophy: embrace AI, use it for lesson planning, adaptive tutoring, personalised feedback, administrative efficiency, and call the result innovation. The OECD notes this clearly — GenAI can improve learning gains if used with a clear pedagogical purpose, but without that purpose it simply outsources cognitive effort. This mode is well-intentioned and often administratively effective. It is also philosophically empty, because it never asks what education is for. It optimises the tool without defining the destination.

The third mode — rare, difficult, and urgently necessary — is philosophical reconstruction: returning to first principles about what a human mind is, what reading does to it, what the symbol/referent distinction means for cognitive development, and then designing AI integration around those principles rather than around the capabilities of the technology. Recent research confirms that technical competence is insufficient for AI adoption in education. What is required is a critical AI literacy that integrates ethics and pedagogy, restoring decision-making authority to the teacher and ensuring fair, humanising assessment.

This is the work. It is not glamorous. It does not generate venture capital. It will not be announced at a TechCrunch conference. It requires educators, philosophers, cognitive scientists, and parents to sit together and ask the hardest question: what are we trying to grow in a child’s mind, and how do we protect the conditions that make that growth possible?

The answer, I am arguing, begins with reading. Real reading. Sustained, difficult, solitary, screen-free encounter with text that makes demands — that uses words the child does not yet know, that describes experiences the child has not yet had, that forces the construction of internal models of other people’s interiority. This is not nostalgia. This is neuroscience. The reading brain is a different brain from the non-reading brain — more connected, more flexible, more capable of the kind of deep analogical reasoning that is, incidentally, the one cognitive capacity that AI cannot replicate and will not replicate, because it is grounded in embodied human experience.

A child who cannot read deeply cannot think clearly. A child who cannot think clearly cannot tell the symbol from the referent. A child who cannot tell the symbol from the referent is, in the age of generative AI — which produces nothing but symbols, at extraordinary velocity and fluency, with zero referential grounding — entirely defenceless against the most powerful manipulation technology ever built.

We are not talking about literacy as a basic skill. We are talking about literacy as the foundational architecture of a mind capable of living consciously in the world that is coming. Every other pedagogical question — how to use AI, what to assess, how to train teachers, how to structure curriculum — is downstream of whether we have produced human beings who know the difference between the word and the thing.

The word is not the thing. The map is not the territory. The output is not the thought.

Teach that first. Teach it early. Teach it through the only technology that has ever reliably built that capacity in a human mind at scale.

Give them books. Make them read. Let them struggle with the gap between the symbol and what it points at. That struggle is not a problem to be solved by a more efficient tool. It is the education.

The Vertical Dispatch is published by Glen Roberts, writing as The Architect. The framework of Universal Dynamics underlies the structural reading of geopolitical and civilisational force in this and all Dispatch analyses.
