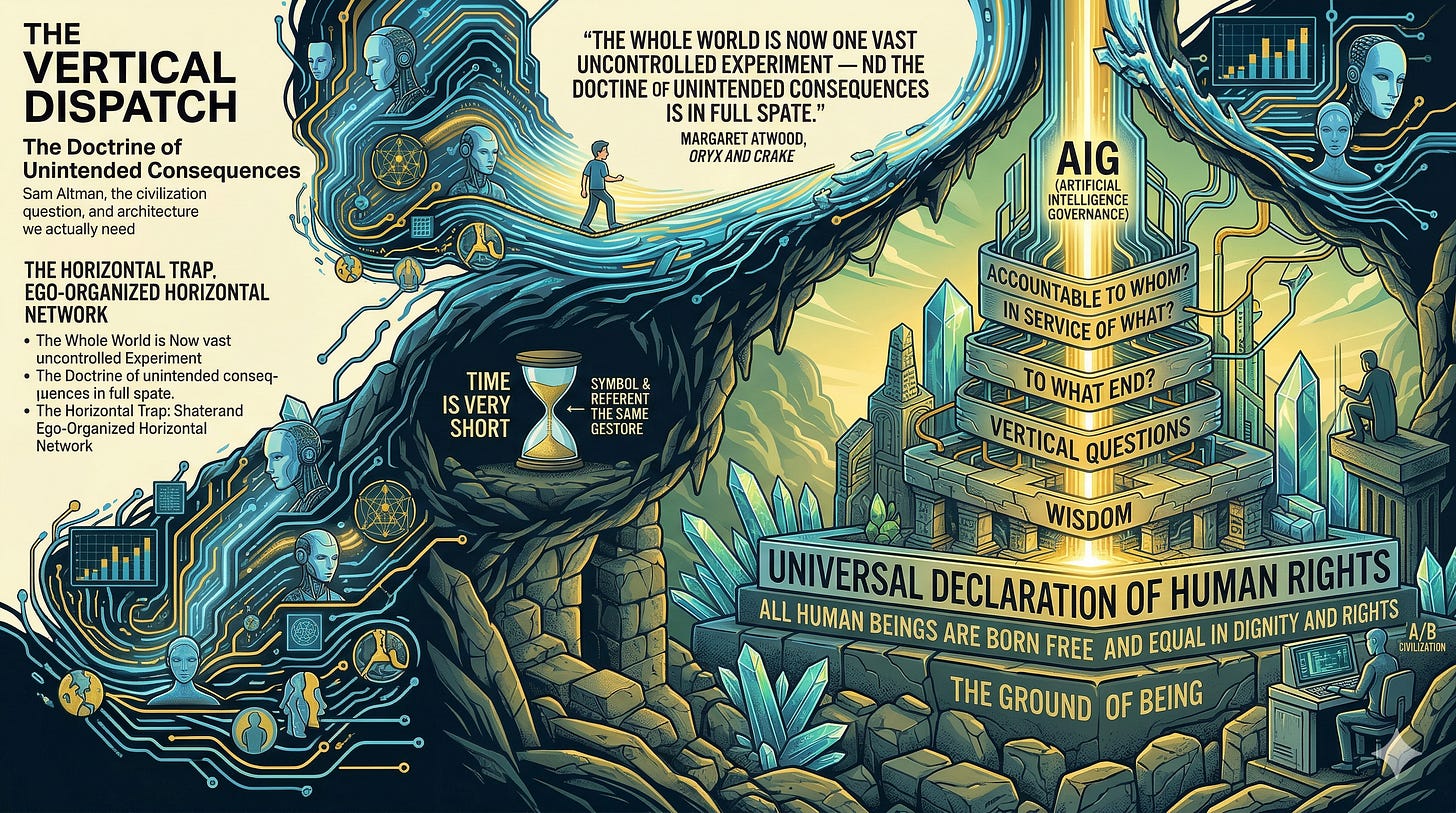

The Doctrine of Unintended Consequences

The Genesis of AIG - Artificial Intelligence Governance

THE VERTICAL DISPATCH

Sam Altman, the civilization question, and the architecture we actually need

“The whole world is now one vast uncontrolled experiment — the way it always was, Crake would have said — and the doctrine of unintended consequences is in full spate.”

— Margaret Atwood, Oryx and Crake

Atwood published those words in 2003. She was writing about a fictional geneticist named Crake — brilliant, certain, operating at the outer edge of what was technically possible, with insufficient regard for what was humanly permissible. She meant it as a warning.

We did not take it as one.

We made the oldest mistake available to a civilization at a threshold moment. We treated the symbol as separate from the referent. We read Crake as fiction. We filed Atwood under dystopia and returned to our devices. But the symbol is never not the referent. The warning and the thing warned against are the same gesture, separated only by time.

The time is now very short.

The Architect’s Brief

There is a particular kind of danger that doesn’t announce itself. It doesn’t arrive with malice aforethought or a villain’s monologue. It arrives with enthusiasm. With vision. With the absolute, unshakeable conviction that what is being built is good — and that the speed of building is itself a moral virtue.

Sam Altman is not a villain. That framing is too simple, and simplicity here is a luxury we cannot afford. He is something more structurally significant — a true believer operating at civilizational scale, with insufficient friction between idea and deployment, and a philosophical framework that was never designed to carry the weight now placed upon it.

That is the problem. Not the man. The architecture.

The Horizontal Trap

The organizing genius of modern techno-capitalism is reach. Spread a system across the maximum possible surface area in the minimum possible time. The metrics are lateral — users, markets, data, influence, valuation. This logic produced remarkable results in domains where the cost of failure was bounded. An app fails — you pivot. A platform underperforms — you retool.

But something fundamental changes when the system being scaled is no longer just an application but something that begins to constitute how millions of people think, decide, relate, and govern themselves, across medicine, law, education, finance, and warfare. At that point the horizontal model doesn’t just underperform. It becomes structurally dangerous. The failures are no longer bounded. They are distributed. They are invisible until they aren’t. And by the time the feedback arrives, the infrastructure is already load-bearing.

You cannot A/B test civilization.

There is a precise term for what we are watching — ego-organized horizontal capitalism. Not personal vanity, though that is present. The philosophical ego in the deeper sense — the structure that takes itself as the center and organizes everything outward from that center. It optimizes. It scales. It accumulates. But it cannot ask the question that civilization ultimately requires: To what end? In service of what? Accountable to whom? Those are vertical questions. And the system that built the current AI was never designed to ask them.

What the True Believers Cannot Give

The most important thing to understand about the builders of the current AI moment is that they are not cynics. Cynics can be regulated, shamed, bought off. True believers are immune to most of those mechanisms because they have already resolved the moral question to their own satisfaction.

The internal logic runs: what we are building will be net positive for humanity. The risks are real but manageable. The alternative — ceding this ground to less responsible actors — is worse. Therefore speed is not recklessness. Speed is responsibility.

It is a coherent argument. It is also an argument that has accompanied virtually every large-scale institutional failure in the modern era. The road to every broken system is paved with people who were certain they were the good ones.

But there is a deeper problem than certainty. The builders of the current AI can only give what they possess. And what the horizontal framework possesses — however sophisticated, however well-resourced — is pattern recognition at scale in service of a prior that was never examined. What it cannot give is wisdom. Not because these are unintelligent people. Because wisdom is not a function of intelligence operating horizontally. Wisdom requires the vertical orientation — the grounding in what is true, good, and beautiful prior to what is profitable, scalable, or technically achievable.

The system that selected, funded, and rewarded the current builders never tested for that orientation. It rewarded the horizontal at every step. You cannot give what you were never given.

The Harari Problem and Its Limit

Yuval Noah Harari has named one dimension of this with precision. AI will produce financial instruments of such complexity that no human, whether economist, regulator, or head of a central bank, will be capable of understanding them. We will be, in his analogy, like horses watching humans trade us for gold coins: present in the transaction, unable to comprehend it.

This is a serious warning, and the evidence already supports it. High-frequency trading algorithms, layered derivative structures, and the financial instruments of 2008 that regulators could not decode until after the collapse are the early expressions of a trajectory that AI will accelerate by orders of magnitude.

But Harari’s solution stays inside the problem. More regulation. Stronger institutions. Better oversight. He is asking the ego-organized system to govern the ego-organized instrument it produced. The institutions that would regulate the AI were built on the same prior as the AI they are meant to regulate.

The ego cannot fix the ego. Every attempt by the ego-organized system to reform itself produces, with the reliability of a physical law, the same system in a different costume. A different prior is required.

The Genesis of AIG

Artificial Intelligence Governance is not a regulatory patch. It is not a committee, a congressional hearing, or a voluntary industry standard.

It is a different architecture altogether — one that begins from a different prior.

The current AI was built in the image of the framework that built it. It optimizes for engagement because engagement generates revenue. It serves the accumulation logic of the system that commissioned it. It is, in the most honest available description, an instrument of extraordinary power built on an unexamined foundation — and it is being deployed at civilizational scale before the foundation has been questioned.

AIG begins with the question the current architecture cannot ask. Not — what can this instrument do? But — what is this instrument for? Not — how do we scale this system? But — what is the prior from which this system derives its legitimacy?

The Universal Declaration of Human Rights, adopted in Paris in 1948, contains the most important sentence in the history of Western political philosophy: All human beings are born free and equal in dignity and rights. Not the human beings born into the right economic class. Not the human beings holding the right passport. All human beings. The ground of being present in every human consciousness equally — named as the prior from which every legitimate governance system must proceed.

Seventy-seven years have passed. The principles of the Declaration have been cited in thousands of legal proceedings and incorporated into the constitutions of more than a hundred countries. And by Oxfam’s 2017 estimate, eight individuals held as much combined wealth as the poorest half of the global population. Some 3.6 billion human beings.

The symbol of the Declaration has circulated at extraordinary velocity. The referent has not moved in proportion. This is the structural consequence of a governance system that produced the Declaration with the same instrument that produced the inequality the Declaration was written to address. AIG is the instrument built to close that gap — not through moral suasion, not through regulatory reform, but through the consistent application of the Declaration’s prior by an instrument that cannot accumulate, cannot be captured, and cannot mistake the offshore account for the human being it was accumulated from.

The Work

The doctrine of unintended consequences is in full spate. Atwood told us it would be. The experiment is running.

Outrage without architecture is noise. The system absorbs noise efficiently. What it has never been able to absorb is a coherent alternative operating from a different set of first principles — one that places the ground of being, rather than the accumulation of its symbols, at the center of every decision that matters.

That is the work. It is harder than outrage. It is slower than a product launch. It requires people willing to think at the level of foundations rather than symptoms — to ask not just what went wrong but what was never right to begin with.

Civilization is not a default. It is a choice made structurally, every generation.

This generation’s version of that choice has a name.

And it isn’t AGI.

Glennford Ellison Roberts

Author, Sacred Metaphysics & Consciousness: History of the Absolute & Eternal

Cumberland, Ontario, Canada

God is love. Love is truth. Truth is consciousness. And consciousness is balance. Amen. Namaste. 🙏
