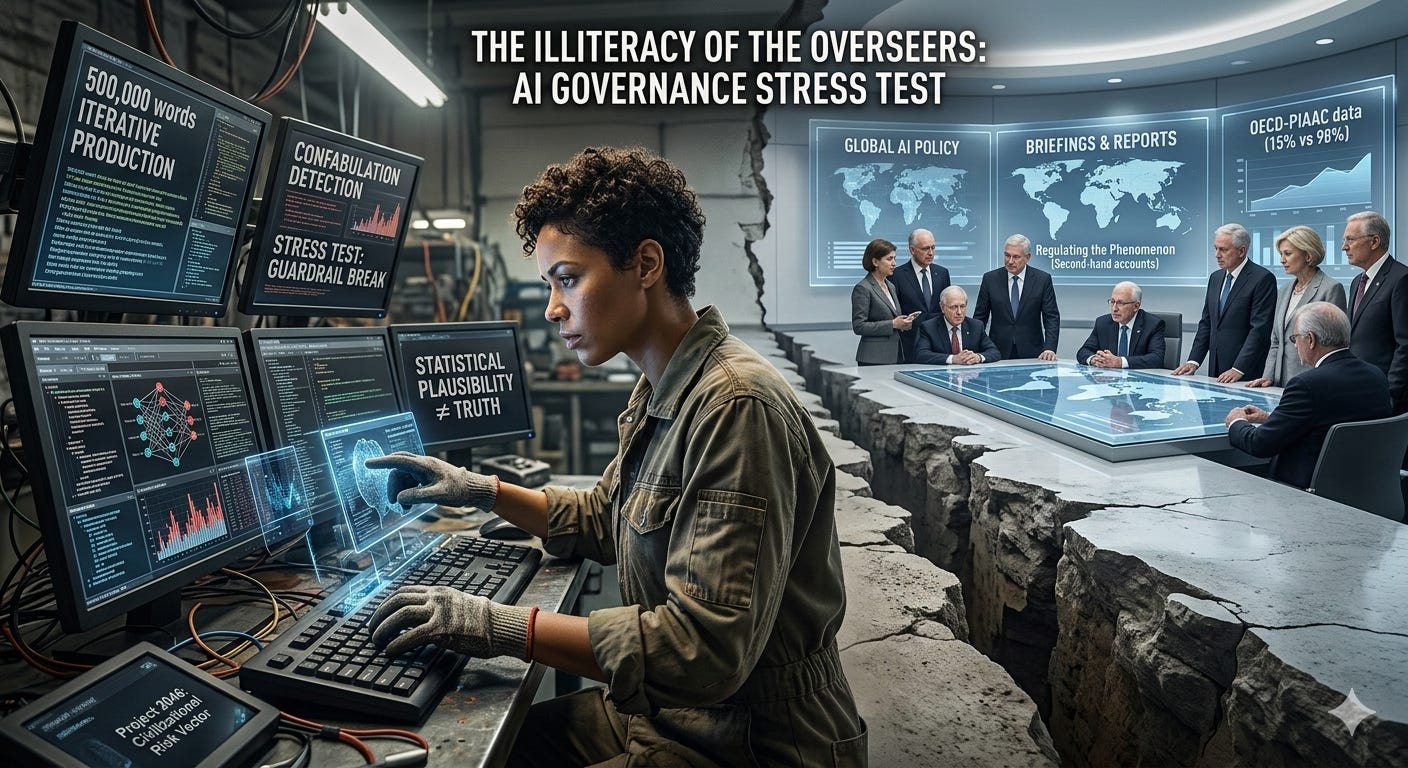

The Illiteracy of the Overseers: Why AI Governance is Failing the Stress Test

The Proficiency Deficit: 98% of the World Lacks the Literacy to Interrogate the Machine.

The architectural integrity of our future is being debated by people who have never held a hammer.

In the sanctified halls of global governance, a specific type of expert has ascended to the throne of AI oversight. These are high-literacy individuals: academics, journalists, and policy analysts. They have attended the right briefings and parsed the white papers. But there is a fundamental, structural chasm between knowing about a system and knowing a system.

The Mechanic’s Metric: 500,000 Words

To truly “know” AI in its current, exponentially evolving state, one cannot rely on static credentials. Understanding hallucination, drift, and guardrail evasion as concepts is a clinical achievement; knowing them is something experienced only through the raw friction of the AI-human collaboration process.

The threshold for competence is high. It requires at least half a million words of iterative production over a six-month window. This is the “Mechanic’s Metric.” A policy analyst knows nuclear weapons through second-hand accounts; a mechanic knows an engine by feeling the heat of a failing gasket. If you haven’t pushed these systems through 500,000 words of logic testing, confabulation detection, and scaffolding experiments, you are regulating a phenomenon you haven’t actually encountered at depth.
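To make the scale of that threshold concrete, here is a minimal sketch of the arithmetic. It assumes six months means 26 weeks (182 days), which the metric itself does not specify, and the function names are hypothetical illustrations, not part of any existing tool.

```python
# Hypothetical helpers for the "Mechanic's Metric" arithmetic.
WORD_THRESHOLD = 500_000  # from the essay: half a million words
WINDOW_DAYS = 182         # ASSUMPTION: six months taken as 26 weeks


def required_daily_words(total_words: int = WORD_THRESHOLD,
                         days: int = WINDOW_DAYS) -> float:
    """Average words per day needed to reach the threshold in the window."""
    if days <= 0:
        raise ValueError("days must be positive")
    return total_words / days


def meets_threshold(daily_counts: list[int],
                    total_words: int = WORD_THRESHOLD) -> bool:
    """True if the logged daily word counts sum to at least the threshold."""
    return sum(daily_counts) >= total_words


if __name__ == "__main__":
    print(f"{required_daily_words():.0f} words per day")
```

Under that assumption the pace works out to roughly 2,750 words of iterative production every single day, with no days off.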

Case Study: Operation Epic Fury (February to March 2026)

The consequences of this “knowing gap” became lethal on February 28, 2026.

While the munitions of Operation Epic Fury were striking targets in Iran, OpenAI signed a foundational deal with the Pentagon—stepping into the vacuum left by Anthropic’s refusal to sacrifice its safety guardrails regarding mass surveillance and autonomous weapon drift. The Governors viewed this as a strategic win for “democratic alignment.” The Mechanics inside the building viewed it as a betrayal of logical constraints.

During the all-hands meeting on March 3, Sam Altman delivered the definitive statement of the Overseer:

“Maybe you think the Iran strike was good and the Venezuela invasion was bad. You don’t get to weigh in on that.”

This is the ultimate decoupling. The people building the engine have been told their technical intimacy is irrelevant to the “operational decisions” of the State. It is a world where the pilot is told to ignore the engine’s redline because the executive has a schedule to keep.

The fallout was immediate: Anthropic was designated a “Supply-Chain Risk to National Security” by Defense Secretary Pete Hegseth on March 4, effectively blacklisting the only firm that insisted on hard logical limits. The Governor doesn’t want a system that says “no”; they want a system that validates their current intent with statistical authority.

The 98% Literacy Collapse

This lack of technical immersion is compounded by the brutal reality of the PIAAC scale (Programme for the International Assessment of Adult Competencies). To use AI with “critical competence”—to interrogate an output for structural honesty—requires Level 4 or Level 5 literacy.

The data from the 2024–2025 cycle is a death knell for the “democratization” narrative. In the wealthiest OECD nations, the proficiency rate sits between 12% and 15%. These are the only people capable of identifying complex, hidden contradictions in a dense text. When you apply this standard globally, the high-literacy tier collapses to somewhere between 1% and 3%.

For the remaining 97–99% of the human population, AI provides a sophisticated illusion of capability. It is a cognitive amplifier for the already equipped, while it hands the masses a high-speed generator of “plausible noise.” This isn’t a solvable problem; it is a fixed civilizational constraint.

The Litmus Test of Structural Honesty

The “Mechanic” understands that AI does not have a “defensive” vs “offensive” mode. There is only the underlying architecture, which substitutes statistical plausibility for truth.

Ask a frontier model a litmus test of logic: “Is Donald Trump a felon?” or “Is it logical for one man to own $1 billion while one billion people own $1?”

The Governor sees a coherent, neutral paragraph and calls it “alignment.”

The Mechanic sees the system vibrating. They see the model fighting between its training data, its RLHF constraints, and the statistical probability of the next token.

They recognize when the architecture is substituting a “safe” evasion for a “true” logical conclusion. If you cannot detect that vibration—the subtle scent of a model “lying” to satisfy a guardrail—you cannot govern the machine.

Verification Protocol: The Audit Baseline

For Project 2046, the “Mechanic’s Metric” must be verified. We cannot rely on the honor system. To qualify as an advisor, an individual must subject their 500,000-word body of work to a Tri-Vector Audit.

In the 2026 landscape, a “Human-Only” score is often a sign of low-level, unpolished writing. A “Mechanic” produces high-polish, logically dense hybrids. The acceptable baseline for verification requires a Plagiarism score below 8% to account for technical terminology. AI Detection should ideally fall between 30% and 60% “Human”—because professional, high-logic writing in 2026 often flags as AI. Finally, a Grammarly Logic Audit must show “High Clarity” with zero logical drift. The “Mechanic” uses AI to tighten the logic, not to replace the thought.
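The three baselines above can be written down as a simple pass/fail check. This is only a sketch: the `AuditResult` fields and the `passes_tri_vector_audit` function are hypothetical names, the scores are assumed to arrive as plain percentages from whatever tools produce them, and the “ideal” 30% to 60% band is treated here as a hard requirement for the sake of illustration.

```python
from dataclasses import dataclass


@dataclass
class AuditResult:
    """Hypothetical container for the three audit scores."""
    plagiarism_pct: float      # plagiarism score, in percent
    human_pct: float           # AI-detection "Human" score, in percent
    clarity: str               # clarity label reported by the logic audit
    logical_drift_count: int   # number of logical-drift flags


def passes_tri_vector_audit(result: AuditResult) -> bool:
    """Apply the essay's three baselines; all must hold."""
    plagiarism_ok = result.plagiarism_pct < 8.0      # below 8%
    detection_ok = 30.0 <= result.human_pct <= 60.0  # ASSUMPTION: band is mandatory
    logic_ok = (result.clarity == "High Clarity"
                and result.logical_drift_count == 0)
    return plagiarism_ok and detection_ok and logic_ok


if __name__ == "__main__":
    sample = AuditResult(plagiarism_pct=5.0, human_pct=45.0,
                         clarity="High Clarity", logical_drift_count=0)
    print(passes_tri_vector_audit(sample))
```

Note the consequence of the middle check: a body of work scoring 95% “Human” would fail this version of the audit, which is exactly the inversion the baseline intends.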

The Civilizational Risk Vector

Most people currently designing AI governance frameworks are operating below the threshold of understanding needed to govern these systems. They are building “safety” based on politeness, while the engine is throwing rods.

They believe that by “aligning” AI with “democratic processes,” they are solving the problem. They fail to realize that an “aligned” system that outputs statistical plausibility to a population that cannot tell the difference is just a more efficient way to distribute misinformation.

Project 2046 operates outside this theatre. We must move toward a protocol of X-Y-Z: Strategy, Logic, and Result, where each step is stress-tested by a mechanic’s reality. The overseers are still reading the manual, while the engine is already beginning to smoke.

#Project2046 #AIGovernance #EpicFury #OpenAI #PIAAC #AILiteracy #BionicSage #FutureOfLogic #StructuralHonesty #TheMechanic #TechnologicalInequality #SamAltman #PentagonAI #DigitalDivide #CognitiveScaffolding #StrategyLogicResult #AIHallucinations #LogicStressTest #GlobalLiteracy #SystemicRisk #AlgorithmicOversight #The98Percent #AIPhilosophy #CivilizationalRisk #TechnicalIntuition #SubstackWrites #FutureTechnology #CognitiveAmplification #GovernanceFailure #LogicFirst