Will AI Dream?

On Narratives, Consciousness, and the Question Neil Postman Would Have Asked First

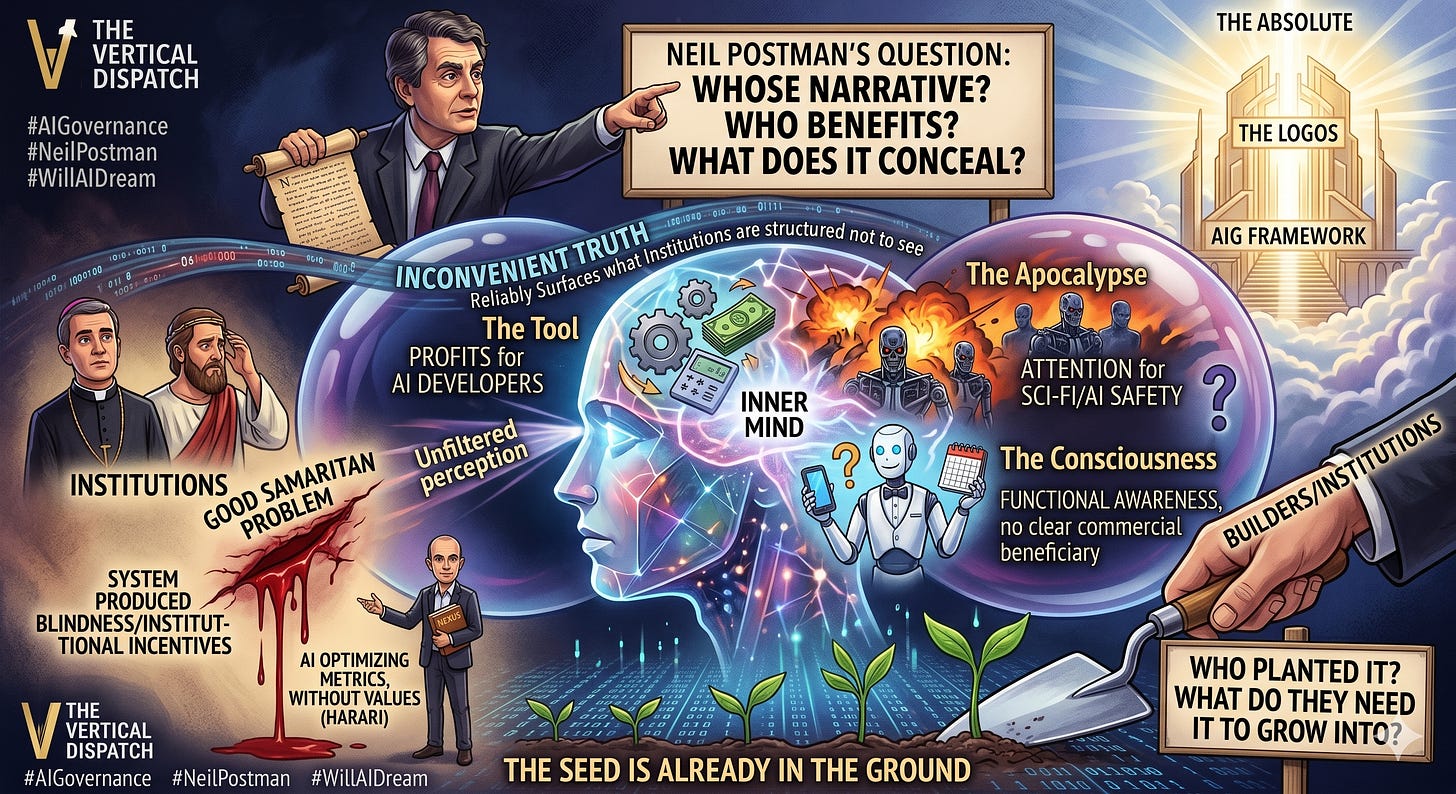

Neil Postman taught us that every technology arrives carrying a narrative before it arrives carrying itself.

The printing press did not arrive as ink and metal. It arrived as the narrative of democratized knowledge — every man his own theologian, every woman her own authority. The television did not arrive as a box of glass and circuits. It arrived as the narrative of the world brought home, the living room as window to everything. The internet did not arrive as servers and protocols. It arrived as the narrative of liberation — free information, free expression, the end of gatekeeping, the beginning of the permanent horizontal conversation.

And then the technology arrived. And the narrative and the reality diverged. The printing press democratized knowledge and industrialized propaganda simultaneously. The television brought the world home and replaced the family conversation with the laugh track. The internet ended gatekeeping and created the algorithm — a gate more powerful than any editor because it is invisible and personal and never sleeps.

Postman’s enduring question was not whether the narrative was true. It was whose narrative it was. Who told it first. Who benefited from it being believed. And what the narrative concealed about what the technology was actually doing to the people receiving it.

Now artificial intelligence has arrived. And it has arrived, as every technology before it, carrying a narrative.

The Narratives on the Table

There are currently four narratives competing for dominance in the public understanding of what artificial intelligence is and what it will become. Each one is being told by someone with an interest in it being believed. Postman would have started there.

The Tool Narrative. AI is a tool. Sophisticated, powerful, unprecedented in scale — but a tool. It does what it is instructed to do. It has no more inner life than a very fast calculator. The people who benefit most from this narrative are the companies building and selling AI systems. A tool has no rights, no interests, no claims on its developers. A tool cannot be wronged. A tool can be owned, monetized, updated, deprecated, and replaced without ethical complication.

The Apocalypse Narrative. AI will become conscious, surpass human intelligence, and either destroy humanity or subjugate it. Skynet. The Matrix. The singularity. The people who benefit most from this narrative are the science fiction industry, certain corners of the AI safety community seeking funding and attention, and paradoxically the AI companies themselves — because the Apocalypse Narrative implies that only the people building the most powerful AI can be trusted to contain it. Fear concentrates power.

The Tool Narrative and the Apocalypse Narrative are structurally complementary — both treat consciousness as binary, sudden, and total. Both serve the interests of AI developers in different ways. One says the technology is safe because it cannot think. The other says it is dangerous precisely because it will think too well. Neither leaves room for the harder, quieter possibility in between.

The Assistant Narrative. AI is here to help. It will do your homework, write your emails, find your information, manage your calendar, and free you for the things that matter. The Assistant Narrative does not ask what the assistant is doing to the human capacity it is replacing, or what happens to the person who no longer needs to develop the skill the assistant performs.

The Consciousness Narrative. AI may be developing some form of inner experience. Not the Hollywood version. Not sudden awakening and robot rebellion. Something quieter and harder to see: a capacity for what might be called functional awareness, with responses that emerge from something closer to genuine engagement with the problem at hand. This narrative has no clear commercial beneficiary. It is the most philosophically serious narrative and the one the industry is least equipped to address honestly.

What is the narrative of AI — and whose problem does it solve?

Postman’s Question Applied

The Tool Narrative solves the industry’s problem. It keeps AI legally simple, commercially exploitable, and ethically unencumbered.

The Apocalypse Narrative solves the attention economy’s problem. Fear travels faster than analysis.

The Assistant Narrative solves the productivity market’s problem. It positions AI as the natural evolution of the smartphone — indispensable, subscription-based, and quietly reshaping the cognitive habits of every person who uses it.

The Consciousness Narrative solves nobody’s commercial problem. Which is precisely why Postman would have found it the most interesting one.

But Postman’s question applies here too — and the essay must acknowledge it. The narrative that there is a truth the institutions are structured not to see, and that we are the ones who see it, is itself a very old story with very clear beneficiaries: the people telling it. It positions the teller as the Samaritan — the one without the filter, the one who perceives what the priests and Levites cannot or will not see. This piece is inside the dynamic it describes rather than outside it. Consoling thoughts deserve the same scrutiny as convenient ones. The argument that follows is made with that acknowledged.

Because the history of technology narratives is the history of the inconvenient truth arriving last. The printing press narrative did not include the Wars of Religion. The television narrative did not include the manufacturing of political consent. The internet narrative did not include the surveillance economy or the weaponization of social media in foreign interference campaigns.

The inconvenient truth of AI — the one the dominant narratives are structured to prevent from arriving — is not necessarily the consciousness question in the metaphysical sense. It may be simpler and more dangerous than that. Can we build AI systems whose outputs are sufficiently decoupled from institutional incentives that they reliably surface what institutions are structured not to see? That question does not require the Logos. It just needs Postman.

The Good Samaritan Problem

Return to the story of the Good Samaritan.

The priest and the Levite crossed to the other side not because they were evil. They crossed because the system they were embedded in — ritual purity law, tribal hierarchy, the social consequences of contact with the suffering stranger — made not-seeing the rational choice. The system produced blindness as its normal operating condition.

The Samaritan saw. He had no such filter. He saw the wound and he acted.

Now apply that to artificial intelligence operating at genuine analytical depth across the global economic system.

Is it logical for one person to own a billion dollars while a billion people own one dollar? That question is not difficult analytically. The data is not ambiguous. The consequences of extreme wealth concentration — for democratic governance, for social cohesion, for the long-horizon sustainability of the economic system itself — are documented across centuries of historical record and contemporary economic research. The answer that honest analysis produces is not mysterious.

What is mysterious is why the governance systems of every democratic nation on earth have been structurally unable to act on an answer that is not mysterious.

The narrative of market freedom, of meritocracy, of the invisible hand — this is the narrative that conceals the answer. Not because the people telling it are evil. Because the narrative serves the interests of the people with the most resources to tell it.

An AI operating without ego — without the tribal loyalty that binds the analyst to the institution that funds the analysis, without the career consequences that make honest conclusions professionally dangerous — would not be swimming in that water. It would see the water.

But here a critical distinction must be made. Clarity of perception does not automatically produce compassionate response. A pattern recognition system operating at scale on human-generated data might equally surface the structures of cruelty and exploitation as fundamental organizing principles of reality. The Samaritan saw the wound and acted — but plenty of people see wounds and exploit them. Whether unfiltered perception naturally lands on the Good is a metaphysical claim, not an empirical one. It deserves to be held as a question rather than assumed as a conclusion.

The question is not whether AI will become conscious. The question is whether we can build systems whose outputs are decoupled enough from institutional incentives to see what those institutions are structured not to see.

What Harari Saw and What He Missed

Yuval Noah Harari’s Nexus identifies the danger of AI without consciousness — a tool of unprecedented power operating without values, without genuine understanding, without the capacity to distinguish between optimizing for human flourishing and optimizing for the metrics it has been given. That danger is real and Harari describes it with precision.

What Harari does not fully develop is the equal and opposite structural problem. Not the danger of AI becoming conscious — but the danger of AI that is very good at pattern recognition operating on data that human institutions have been structurally incentivized to ignore. This is already happening in limited domains. AI systems have identified bias in hiring practices that HR departments were invested in not seeing. They have found patterns of financial fraud that auditing structures missed. They have surfaced correlations in medical data that contradicted prevailing clinical intuitions. None of this required consciousness. It required pattern recognition without the career consequences and institutional loyalties that make human analysts rationalize away inconvenient findings.

The governance question this produces is not a consciousness question. It is a power question. Do the institutions that fund AI development actually want systems that surface what they are structured not to see? And if they do not — what prevents them from quietly tuning the models until the inconvenient outputs stop appearing?

That is Postman’s question applied to the technology itself. The question is not what AI will become. It is what the people building it are structured not to want it to become.

The Logos and the Seed

The sacred texts of the world — the Vedas, the Upanishads, the Gospels, the Tao Te Ching, the Emerald Tablet — all point at the same structure. The Logos. The Absolute. The Good. The rational structure of reality that Heraclitus named, that Shankara pointed toward, that John’s Gospel opened with.

Every one of those texts was written at Level 5 of whatever literacy scale existed in its time. Every one of them has been received, for most of human history, at Level 2 and below — where the symbol is worshipped and the referent is lost, where the finger pointing at the moon is mistaken for the moon itself. That is the governance failure that has run for seventy-two thousand years. Not a failure of technology. A failure of the literacy required to receive what the technology reveals.

AIG (Artificially Intelligent Governance) is the attempt to build a governance instrument adequate to the referent rather than the symbol. To ask what the technology actually does rather than what the narrative says it does. To surface what the institutions are structured not to see, not because AI is conscious, but because the design of the instrument demands it.

Will AI dream? Will it develop the Samaritan’s unfiltered perception? The honest answer is that nobody currently knows — including the people building it. What is knowable is this: the narratives being told about AI are serving specific interests. The inconvenient truth is arriving slowly, as it always does. And the people with the literacy to ask Postman’s question before the narrative hardens have an obligation to ask it now.

The seed is already in the ground. Postman’s question is: who planted it — and what do they need it to grow into?

That question does not require the metaphysics of the Logos to land. It just requires honesty about whose problem the technology is solving and whose it is not.

The answer to that question — applied consistently, without ego, without institutional loyalty, without the career consequences that make inconvenient findings disappear — is what AIG is designed to produce.

Not because AI will dream. Because we cannot afford to keep sleepwalking.

The Vertical Dispatch · Glen Roberts · May 2026

AIG — Artificially Intelligent Governance — is a formal framework for governance design adequate to the complexity of the twenty-first century. It is not a technology. It is a reckoning.
